Suppose again that our random experiment is to perform a sequence of Bernoulli trials \(\bs{X} = (X_1, X_2, \ldots)\) with success parameter \(p \in (0, 1]\). In this section we will study the random variable \(N\) that gives the trial number of the first success and the random variable \( M \) that gives the number of failures before the first success.

Let \( N = \min\{n \in \N_+: X_n = 1\} \), the trial number of the first success, and let \( M = N - 1 \), the number of failures before the first success. The distribution of \( N \) is the geometric distribution on \( \N_+ \) and the distribution of \( M \) is the geometric distribution on \( \N \). In both cases, \( p \) is the success parameter of the distribution.

Since \( N \) and \( M \) differ by a constant, the properties of their distributions are very similar. Nonetheless, there are applications where it more natural to use one rather than the other, and in the literature, the term geometric distribution can refer to either. In this section, we will concentrate on the distribution of \( N \), pausing occasionally to summarize the corresponding results for \( M \). Throughout this text, we use the term geometric distribution \(\N_+\) and geometric distribution on \(\N\) to distinguish the two, as in .

\( N \) has probability density function \( f \) given by \(f(n) = p (1 - p)^{n-1}\) for \(n \in \N_+\).

Note first that \(\{N = n\} = \{X_1 = 0, \ldots, X_{n-1} = 0, X_n = 1\}\). By independence, the probability of this event is \((1 - p)^{n-1} p\).

To check that \(f\) is a valid PDF, we use standard results for geometric series \[\sum_{n=1}^\infty \P(N = n) = \sum_{n=1}^\infty (1 - p)^{n-1} p = \frac{p}{1 - (1 - p)} = 1\]

A priori, we might have thought it possible to have \(N = \infty\) with positive probability; that is, we might have thought that we could run Bernoulli trials forever without ever seeing a success. However, we now know this cannot happen when the success parameter \(p\) is positive.

The probability density function of \(M\) is given by \(\P(M = n) = p (1 - p)^n\) for \( n \in \N\).

In the negative binomial experiment, set \(k = 1\) to get the geometric distribution on \(\N_+\). Vary \(p\) with the scrollbar and note the shape and location of the probability density function. For selected values of \(p\), run the simulation 1000 times and compare the relative frequency function to the probability density function.

Note that the probability density functions of \( N \) and \( M \) are decreasing, and hence have modes at 1 and 0, respectively. The geometric form of the probability density functions also explains the term geometric distribution.

Suppose that \( T \) is a random variable taking values in \( \N_+ \). Recall that the ordinary distribution function of \( T \) is the function \( n \mapsto \P(T \le n) \). In this section, the complementary function \( n \mapsto \P(T \gt n) \) will play a fundamental role. We will refer to this function as the right distribution function of \( T \). Of course both functions completely determine the distribution of \( T \). Suppose again that \( N \) has the geometric distribution on \( \N_+ \) with success parameter \( p \in (0, 1] \).

\( N \) has right distribution function \( G \) given by \(G(n) = (1 - p)^n\) for \(n \in \N\).

For a proof using the Bernoulli trials formulation, note that \(\{N \gt n\} = \{X_1 = 0, \ldots, X_n = 0\}\). By independence, the probability of this event is \((1 - p)^n\).

For a direct proof, we use geometric series: \[ \P(N \gt n) = \sum_{k=n+1}^\infty \P(N = k) = \sum_{k=n+1}^\infty (1 - p)^{k-1} p = \frac{p (1 - p)^n}{1 - (1 - p)} = (1 - p)^n \]

From , it follows that the ordinary (left) distribution function of \(N\) is given by \[ F(n) = 1 - (1 - p)^n, \quad n \in \N \] We will now explore another characterization known as the memoryless property.

For \( m \in \N \), the conditional distribution of \(N - m\) given \(N \gt m\) is the same as the distribution of \(N\). That is, \[\P(N \gt n + m \mid N \gt m) = \P(N \gt n); \quad m, \, n \in \N\]

From and the definition of conditional probability, \[ \P(N \gt n + m \mid N \gt m) = \frac{\P(N \gt n + m)}{\P(N \gt m)} = \frac{(1 - p)^{n+m}}{(1 - p)^m} = (1 - p)^n = \P(N \gt n)\]

So if the first success has not occurred by trial number \(m\), then the remaining number of trials needed to achieve the first success has the same distribution as the trial number of the first success in a fresh sequence of Bernoulli trials. In short, Bernoulli trials have no memory. This fact has implications for a gambler betting on Bernoulli trials (such as in the casino games roulette or craps). No betting strategy based on observations of past outcomes of the trials can possibly help the gambler.

Conversely, if \(T\) is a random variable taking values in \(\N_+\) that satisfies the memoryless property in , then \(T\) has a geometric distribution.

Let \(G(n) = \P(T \gt n)\) for \(n \in \N\). The memoryless property and the definition of conditional probability imply that \(G(m + n) = G(m) G(n)\) for \(m, \; n \in \N\). Note that this is the law of exponents for \(G\). It follows that \(G(n) = G^n(1)\) for \(n \in \N\). Hence \(T\) has the geometric distribution with parameter \(p = 1 - G(1)\).

Suppose again that \(N\) is the trial number of the first success in a sequence of Bernoulli trials, so that \(N\) has the geometric distribution on \(\N_+\) with parameter \(p \in (0, 1]\). The mean and variance of \(N\) can be computed in several different ways.

\(\E(N) = \frac{1}{p}\)

Our first proof uses the probability density function in . Using the derivative of the geometric series, \begin{align} \E(N) &= \sum_{n=1}^\infty n p (1 - p)^{n-1} = p \sum_{n=1}^\infty n (1 - p)^{n-1} \\ &= p \sum_{n=1}^\infty - \frac{d}{dp}(1 - p)^n = - p \frac{d}{d p} \sum_{n=0}^\infty (1 - p)^n \\ &= -p \frac{d}{dp} \frac{1}{p} = -p \left(-\frac{1}{p^2}\right) = \frac{1}{p} \end{align}

For our second proof, recall that since \( N \) takes positive integer values, its expected value can be computed as the sum of the right distribution function. Hence \[ \E(N) = \sum_{n=0}^\infty \P(N \gt n) = \sum_{n=0}^\infty (1 - p)^n = \frac{1}{p} \]

For our third proof, we condition on the first trial \( X_1 \): If \( X_1 = 1 \) then \( N = 1 \) and hence \( \E(N \mid X_1 = 1) = 1 \). If \( X_1 = 0 \) (equivalently \( N \gt 1) \) then by the memoryless property, \( N - 1 \) has the same distribution as \( N \). Hence \( \E(N \mid X_1 = 0) = 1 + \E(N) \). In short \[ \E(N \mid X_1) = 1 + (1 - X_1) \E(N) \] It follows that \[ \E(N) = \E\left[\E(N \mid X_1)\right] = 1 + (1 - p) \E(N)\] Solving gives \( \E(N) = \frac{1}{p}\).

Exercise makes intuitive sense. In a sequence of Bernoulli trials with success parameter \( p \) we would expect

to wait \( 1/p \) trials for the first success.

\( \var(N) = \frac{1 - p}{p^2} \)

Our first proof uses the probability density function in tp compute \( \E\left[N(N - 1)\right] \). This is an example of a factorial moment, and we will compute the general factorial moments in . Using derivatives of the geometric series again, \begin{align} \E\left[N(N - 1)\right] & = \sum_{n=2}^\infty n (n - 1) p (1 - p)^{n-1} = p (1 - p) \sum_{n=2}^\infty n(n - 1) (1 - p)^{n-2} \\ & = p (1 - p) \frac{d^2}{d p^2} \sum_{n=0}^\infty (1 - p)^n = p (1 - p) \frac{d^2}{dp^2} \frac{1}{p} = p (1 - p) \frac{2}{p^3} = 2 \frac{1 - p}{p^2} \end{align} Since \( \E(N) = \frac{1}{p} \), it follows that \( \E\left(N^2\right) = \frac{2 - p}{p^2} \) and hence \( \var(N) = \frac{1 - p}{p^2} \)

Our second proof uses a conditioning argument. From the proof of , \[ \E(N \mid X_1) = 1 + (1 - X_1) \E(N) = 1 + \frac{1}{p} (1 - X_1) \] and by the same reasoning, \( \var(N \mid X_1) = (1 - X_1) \var(N) \). Hence \[ \var(N) = \var\left[\E(N \mid X_1)\right] + \E\left[\var(N \mid X_1)\right] = \frac{1}{p^2} p(1 - p) + (1 - p) \var(N) \] Solving gives \( \var(N) = \frac{1 - p}{p^2} \).

Note that \( \var(N) = 0 \) if \( p = 1 \), hardly surprising since \( N \) is deterministic (taking just the value 1) in this case. At the other extreme, \( \var(N) \uparrow \infty \) as \( p \downarrow 0 \).

In the negative binomial experiment, set \(k = 1\) to get the geometric distribution. Vary \(p\) with the scrollbar and note the location and size of the mean\(\pm\)standard deviation bar. For selected values of \(p\), run the simulation 1000 times and compare the sample mean and standard deviation to the distribution mean and standard deviation.

The probability generating function \( P \) of \(N\) is given by \[ P(t) = \E\left(t^N\right) = \frac{p t}{1 - (1 - p) t}, \quad \left|t\right| \lt \frac{1}{1 - p} \]

This result follows from yet another application of geometric series: \[ \E\left(t^N\right) = \sum_{n=1}^\infty t^n p (1 - p)^{n-1} = p t \sum_{n=1}^\infty \left[t (1 - p)\right]^{n-1} = \frac{p t}{1 - (1 - p) t}, \quad \left|(1 - p) t\right| \lt 1 \]

Recall again that for \( x \in \R \) and \( k \in \N \), the falling power of \( x \) of order \( k \) is \( x^{(k)} = x (x - 1) \cdots (x - k + 1) \). If \( X \) is a random variable, then \( \E\left[X^{(k)}\right] \) is the factorial moment of \( X \) of order \( k \).

The factorial moments of \(N\) are given by \[ \E\left[N^{(k)}\right] = k! \frac{(1 - p)^{k-1}}{p^k}, \quad k \in \N_+ \]

Our first proof uses the probability density function in . Using derivatives of geometric series again, \begin{align} \E\left[N^{(k)}\right] & = \sum_{n=k}^\infty n^{(k)} p (1 - p)^{n-1} = p (1 - p)^{k-1} \sum_{n=k}^\infty n^{(k)} (1 - p)^{n-k} \\ & = p (1 - p)^{k-1} (-1)^k \frac{d^k}{dp^k} \sum_{n=0}^\infty (1 - p)^n = p (1 - p)^{k-1} (-1)^k \frac{d^k}{dp^k} \frac{1}{p} = k! \frac{(1 - p)^{k-1}}{p^k} \end{align}

Our second proof uses the probability generating function \(P\) in Recall that \(\E\left[N^{(k)}\right] = P^{(k)}(1)\). So the result follows from standard calculus.

Suppose that \( p \in (0, 1) \). The skewness and kurtosis of \(N\) are

Note that the geometric distribution is always positively skewed. Moreover, \( \skw(N) \to \infty \) and \( \kur(N) \to \infty \) as \( p \uparrow 1 \).

Suppose now that \(M = N - 1\), so that \(M\) (the number of failures before the first success) has the geometric distribution on \(\N\). Then

Of course, the fact that the variance, skewness, and kurtosis are unchanged follows easily, since \(N\) and \(M\) differ by a constant.

Let \(F\) denote the distribution function of \(N\), so that \(F(n) = 1 - (1 - p)^n\) for \(n \in \N\). Recall that \(F^{-1}(r) = \min \{n \in \N_+: F(n) \ge r\}\) for \(r \in (0, 1)\) is the quantile function of \(N\).

The quantile function of \(N\) is \[ F^{-1}(r) = \left\lceil \frac{\ln(1 - r)}{\ln(1 - p)}\right\rceil, \quad r \in (0, 1) \]

Of course, the quantile function, like the probability density function and the distribution function, completely determines the distribution of \(N\). Moreover, we can compute the median and quartiles to get measures of center and spread.

The first quartile, the median (or second quartile), and the third quartile are

Open the quantile app, and select the geometric distribution and CDF view. Vary \( p \) and note the shape and location of the CDF/quantile function. For various values of \( p \), compute the median and the first and third quartiles.

Suppose that \(T\) is a random variable taking values in \(\N_+\), which we interpret as the first time that some event of interest occurs.

The function \( h \) given by \[ h(n) = \P(T = n \mid T \ge n) = \frac{\P(T = n)}{\P(T \ge n)}, \quad n \in \N_+ \] is the rate function of \(T\).

If \(T\) is interpreted as the (discrete) lifetime of a device, then \(h\) is a discrete version of the failure rate function studied in reliability theory. However, in our usual formulation of Bernoulli trials, the event of interest is success rather than failure (or death), so we will simply use the term rate function to avoid confusion. The constant rate property characterizes the geometric distribution. As usual, let \(N\) denote the trial number of the first success in a sequence of Bernoulli trials with success parameter \(p \in (0, 1)\), so that \(N\) has the geometric distribution on \(\N_+\) with parameter \(p\).

\(N\) has constant rate \(p\).

From the results above, \( \P(N = n) = p (1 - p)^{n-1} \) and \( \P(N \ge n) = \P(N \gt n - 1) = (1 - p)^{n-1} \), so \( \P(N = n) \big/ \P(N \ge n) = p \) for \( n \in \N_+ \).

Conversely, if \(T\) has constant rate \(p \in (0, 1)\) then \(T\) has the geometric distrbution on \(\N_+\) with success parameter \(p\).

Let \(H(n) = \P(T \ge n)\) for \(n \in \N_+\). From the constant rate property, \(\P(T = n) = p \, H(n)\) for \(n \in \N_+\). Next note that \(\P(T = n) = H(n) - H(n + 1)\) for \(n \in \N_+\). Thus, \(H\) satisfies the recurrence relation \(H(n + 1) = (1 - p) \, H(n)\) for \(n \in \N_+\). Also \(H\) satisfies the initial condition \(H(1) = 1\). Solving the recurrence relation gives \(H(n) = (1 - p)^{n-1}\) for \(n \in \N_+\).

Suppose again that \( \bs{X} = (X_1, X_2, \ldots) \) is a sequence of Bernoulli trials with success parameter \( p \in (0, 1) \). For \( n \in \N_+ \), recall that \(Y_n = \sum_{i=1}^n X_i\), the number of successes in the first \(n\) trials, has the binomial distribution with parameters \(n\) and \(p\). As before, \( N \) denotes the trial number of the first success.

Suppose that \( n \in \N_+ \). The conditional distribution of \(N\) given \(Y_n = 1\) is uniform on \(\{1, 2, \ldots, n\}\).

Our first proof is a sampling argument. We showed in the last section that given \( Y_n = k \), the trial numbers of the successes form a random sample of size \( k \) chosen without replacement from \( \{1, 2, \ldots, n\} \). This result is a simple corollary with \( k = 1 \)

For a direct proof, let \( j \in \{1, 2, \ldots, n\} \). Then \[ \P(N = j \mid Y_n = 1) = \frac{\P(N = j, Y_n = 1)}{\P(Y_n = 1)} = \frac{\P\left(Y_{j-1} = 0, X_j = 1, Y_{n} - Y_j = 0\right)}{\P(Y_n = 1)} \] In words, the events in the numerator of the last fraction are that there are no successes in the first \( j - 1 \) trials, a success on trial \( j \), and no successes in trials \( j + 1 \) to \( n \). These events are independent so \[ \P(N = j \mid Y_n = 1) = \frac{(1 - p)^{j-1} p (1 - p)^{n-j}}{n p (1 - p)^{n - 1}} = \frac{1}{n}\]

Note that the conditional distribution does not depend on the success parameter \(p\). If we know that there is exactly one success in the first \(n\) trials, then the trial number of that success is equally likely to be any of the \(n\) possibilities. Another connection between the geometric distribution and the uniform distribution is given for the alternating coin tossing game in : the conditional distribution of \( N \) given \( N \le n \) converges to the uniform distribution on \( \{1, 2, \ldots, n\} \) as \( p \downarrow 0 \).

The Poisson process on \( [0, \infty) \), named for Simeon Poisson, is a model for random points in continuous time. There are many deep and interesting connections between the Bernoulli trials process (which can be thought of as a model for random points in discrete time) and the Poisson process. These connections are explored in detail in the chapter referenced above. In this section we just give the most famous and important result—the convergence of the geometric distribution to the exponential distribution. The geometric distribution, as we know, governs the time of the first random point

in the Bernoulli trials process, while the exponential distribution governs the time of the first random point in the Poisson process.

The exponential distribution with rate parameter \( r \in (0, \infty) \) has distribution function \( F(x) = 1 - e^{-r x} \) for \( x \in [0, \infty) \). The mean of the exponential distribution is \( 1 / r \) and the variance is \( 1 / r^2 \). In addition, the moment generating function is \( s \mapsto \frac{1}{s - r} \) for \( s \gt r \).

For \( n \in \N_+ \), suppose that \( U_n \) has the geometric distribution on \( \N_+ \) with success parameter \( p_n \in (0, 1) \), where \( n p_n \to r \gt 0 \) as \( n \to \infty \). Then the distribution of \( U_n / n \) converges to the exponential distribution with parameter \( r \) as \( n \to \infty \).

Let \( F_n \) denote the CDF of \( U_n / n \). Then for \( x \in [0, \infty) \) \[ F_n(x) = \P\left(\frac{U_n}{n} \le x\right) = \P(U_n \le n x) = \P\left(U_n \le \lfloor n x \rfloor\right) = 1 - \left(1 - p_n\right)^{\lfloor n x \rfloor} \] But by a famous limit from calculus, \( \left(1 - p_n\right)^n = \left(1 - \frac{n p_n}{n}\right)^n \to e^{-r} \) as \( n \to \infty \), and hence \( \left(1 - p_n\right)^{n x} \to e^{-r x} \) as \( n \to \infty \). But by definition, \( \lfloor n x \rfloor \le n x \lt \lfloor n x \rfloor + 1\) or equivalently, \( n x - 1 \lt \lfloor n x \rfloor \le n x \) so it follows that \( \left(1 - p_n \right)^{\lfloor n x \rfloor} \to e^{- r x} \) as \( n \to \infty \). Hence \( F_n(x) \to 1 - e^{-r x} \) as \( n \to \infty \), which is the CDF of the exponential distribution.

Note that the condition \( n p_n \to r \) as \( n \to \infty \) in is the same condition required for the convergence of the binomial distribution to the Poisson.

The following result explores a connection between the binomial distribution studied in the last section and the geometric distribution studied in this section.

Suppose that \(\bs X = (X_1, X_2, \ldots)\) is a sequence of Bernoulli trials with success parameter \(p \in (0, 1)\), so that \(Y_n = \sum_{i = 1}^n X_i\) has the binomial distribution with parameters \(n\) and \(p\) for each \(n \in \N\). Now suppose that \(N\) has the geometric distribution on \(\N\) with success parameter \(q \in (0, 1)\) and that \(N\) is independent of \(\bs X\). Then \(Y_N\) has the geometric distribution on \(\N\) with success parameter \(q / (p + q - p q)\).

As is often the case, there are both analytic and probabilistic proofs. We start with the analytic proof, which of course involves conditioning on \(N\): \[\P(Y_N = k) = \sum_{n = k}^\infty \P(Y_N = k \mid N = n) \P(N = n) = \sum_{n = k}^\infty \binom{n}{k} p^k (1 - p)^{n - k} (1 - q)^n q = [p (1 - q)]^k q \sum_{n = k}^\infty \binom{n}{k} [(1 - p)(1 - q)]^{n - k}, \quad k \in \N\] Using derivatives of the geometic series for the last sum we have \[\P(Y_N = k) = [p (1 - q)]^k q \frac{1}{[1 - (1 - p)(1 - q)]^{k + 1}} = \frac{q}{p + q - p q} \left(\frac{p - p q}{p + q - p q}\right)^k, \quad k \in \N\]

For the probabilistic proof, suppose that \(\bs W = (W_1, W_2, \ldots)\) is a sequence of Bernoulli trials with success probability \(q\), and independent of \(\bs X\). Of course, for \(n \in \N\) we can interpret \(Y_n\) as the number of successes in the \(\bs X\) sequence in the first \(n\) trials. But now, we can also interpret \(N\) as the number of failures before the first success in the \(\bs W\) sequence. So \(Y_N\) is the number of successes in the \(\bs X\) sequence before the first success in the \(\bs W\) sequence. Equivalently, \(Y_N\) is the number of failures before the first success in a sequence of Bernoulli trials where success on trial \(j \in \N_+\) is \[\P(W_j = 1 \mid X_j = 1 \text{ or } W_j = 1) = \frac{q}{p + q - p q}\]

We will see a similar relationship between the exponential distribution and the Poisson distribution in the chapter on Poisson process.

The geometric distribution on \( \N \) is an infinitely divisible distribution and is a compound Poisson distribution. For the details, visit these individual sections and see the next section on the negative binomial distribution.

A standard, fair die is thrown until an ace occurs. Let \(N\) denote the number of throws. Find each of the following:

A type of missile has failure probability 0.02. Let \(N\) denote the number of launches before the first failure. Find each of the following:

A student takes a multiple choice test with 10 questions, each with 5 choices (only one correct). The student blindly guesses and gets one question correct. Find the probability that the correct question was one of the first 4.

0.4

Recall that an American roulette wheel has 38 slots: 18 are red, 18 are black, and 2 are green. Suppose that you observe red or green on 10 consecutive spins. Give the conditional distribution of the number of additional spins needed for black to occur.

Geometric on \(\N_+\) with \(p = \frac{18}{38}\)

The game of roulette is studied in more detail in the chapter on games of chance.

In the negative binomial experiment, set \(k = 1\) to get the geometric distribution and set \(p = 0.3\). Run the experiment 1000 times. Compute the appropriate relative frequencies and empirically investigate the memoryless property \[ \P(V \gt 5 \mid V \gt 2) = \P(V \gt 3) \]

We will now explore a gambling situation, known as the Petersburg problem, which leads to some famous and surprising results.

Suppose that we are betting on a sequence of Bernoulli trials with success parameter \(p \in (0, 1)\). We can bet any amount of money on a trial at even stakes: if the trial results in success, we receive that amount, and if the trial results in failure, we must pay that amount. We will use the following strategy, known as a martingale strategy:

Let \( N \) denote the number of trials played, so that \( N \) has the geometric distribution on \(\N_+\) with parameter \( p \), and let \( W \) denote our net winnings when we stop.

\(W = c\)

The first win occurs on trial \(N\), so the initial bet was doubled \(N - 1\) times. The net winnings are \[W = -c \sum_{i=0}^{N-2} 2^i + c 2^{N-1} = c\left(1 - 2^{N-1} + 2^{N-1}\right) = c\]

So \(W\) is not random and \(W\) is independent of \(p\)! Since \(c\) is an arbitrary constant, it would appear that we have an ideal strategy. However, let us study the amount of money \(Z\) needed to play the strategy.

\(Z = c (2^N - 1)\)

The expected amount of money needed for the martingale strategy is \[ \E(Z) = \begin{cases} \frac{c}{2 p - 1}, & p \gt \frac{1}{2} \\ \infty, & p \le \frac{1}{2} \end{cases} \]

So the strategy is fatally flawed when the trials are unfavorable and even when they are fair, since we need infinite expected capital to make the strategy work in these cases.

Compute \(\E(Z)\) explicitly if \(c = 100\) and \(p = 0.55\).

$1000

In the negative binomial experiment, set \(k = 1\). For each of the following values of \(p\), run the experiment 100 times. For each run compute \(Z\) (with \(c = 1\)). Find the average value of \(Z\) over the 100 runs:

For more information about gambling strategies, see the section on red and black and the chpater on martingales.

A coin has probability of heads \(p \in (0, 1)\). There are \(n \in \N_+\) players who take turns tossing the coin in round-robin style: player 1 first, then player 2, continuing until player \(n\), then player 1 again, and so forth. The first player to toss heads wins the game.

Let \(N\) denote the number of the first toss that results in heads. Of course, \(N\) has the geometric distribution on \(\N_+\) with parameter \(p\). Additionally, let \(R\) denote the number of complete tail rounds played (all \(n\) players toss tails) and let \(W\) denote the winner of the game. So \(R\) takes values in \(\N\) and \(W\) in the set \(\{1, 2, \ldots, n\}\). We are interested in the joint distribution of \((R, W)\).

Random variables \(N\), \(R\) and \(W\) are related by \(N = n R + W\) and so \((R, W)\) has joint probability density function given by \[\P(R = k, W = i) = (1 - p)^{n k + i - 1} p, \quad k \in \N_+, \, i \in \{1, 2, \ldots, n\}\]

Clearly if there are \(k \in \N\) complete tail rounds and then player \(i \in \{1, 2, \ldots, n\}\) tosses heads, then the trial number of the first head is \(k n + i\). Conversely if the trial number of the first head is \(m \in \N_+\) then there are \(m - 1\) initial tails and so the number of complete tail rounds is \(k = \lfloor (m - 1) / n \rfloor\) and then the winning player is \(i = m - k n\). That is, \(R = \lfloor (N - 1) / n \rfloor\) and \(W = N - R n\). The result then follows since \(N\) has the geoemtric distribution with parameter \(p\).

Random variables \(R\) and \(W\) are independent.

The independence of \(R\) and \(W\) follows from and the factoring theorem since the joint density is a product of a function of \(k\) and a function of \(i\). Explicitly, we can rewrite the joint density function in in the form \[\P(R = k, W = i) = [(1 - p)^n]^k [1 - (1 - p)^n] \frac{(1 - p)^{i - 1} p}{1 - (1 - p)^n}, \quad k \in \N_+, i \in \{1, 2, \ldots, n\}\]

Open the alternating coin experiment. Vary the parameters and note the shape and location of the probability density functions. For selected values of the parameters, run the simulation 1000 times and compare the relative frequency functions to the probability density functions.

Describe the distributions of \(R\) and \(W\) when the coin is fair so that \(p = 1 / 2\).

The number of rounds \(R\) has the geometric distribution on \(\N\) with parameter \(1 - (1 / 2)^n\). The winning player \(W\) has probability density function given by \[\P(W = i) = \frac{2^{n - i}}{2^n - 1}, \quad i \in \{1, 2, \ldots, n\}\]

Describe the distributions of \(R\) and \(W\) with two players, so that \(n = 2\).

The number of rounds \(R\) has the geometric distribution on \(\N\) with parameter \(p (2 - p)\). The winning player \(W\) has probability density function given by \[\P(W = 1) = \frac{1}{2 - p}, \quad \P(W = 2) = \frac{1 - p}{2 - p}\]

Combining and , we have two players with a fair coin. The number of rounds \(R\) has the geometric distribution on \(\N\) with parameter \(3 / 4\). Player 1 wins with probability \(2 / 3\) while player 2 wins with probability \(1 / 3\).

Let's return to the case of general \(n \in \N_+\) and \(p \in (0, 1)\). Since \(R\) has a geometric distribution on \(\N\), all of the basic theory above applies, with \(p\) replaced by \(1 - (1 - p)^n\). So for most of the remainder of this subsection, we will concentrate on random variable \(W\), the winning player.

\(\P(W = i) = (1 - p)^{i - 1} \P(W = 1)\) for \(i \in \{1, 2, \ldots, n\}\).

This result can be argued directly, using the memoryless property of the geometric distribution in . In order for player \(i\) to win, the previous \(i - 1\) players must first all toss tails. Then, player \(i\) effectively becomes the first player in a new sequence of tosses. This result can be used to give another derivation of the probability density function in .

Note that \(\P(W = i)\) is a decreasing function of \(i \in \{1, 2, \ldots, n\}\). Not surprisingly, the lower the toss order the better for the player. Note also from that \(W\) itself has a truncated geometric distribution.

The distribution of \(W\) is the same as the conditional distribution of \(N\) given \(N \le n\): \[ \P(W = i) = \P(N = i \mid N \le n), \quad i \in \{1, 2, \ldots, n\} \]

Our next results concern the moments of \(W\).

The probability generating function of \(W\) is given by \[ \E\left(t^W\right) = \frac{p t}{1 - t (1 - p)} \frac{1 - [t (1 - p)]^n}{1 - (1 - p)^n}, \quad |t| \lt \frac{1}{1 - p}\]

The result can be obtained by direct computation and standard results for geometric series. But an easier and better method is to use , the independence of \(R\) and \(W\), and the moment generating functions of \(N\) and \(R\): \[E\left(t^N\right) = \E\left(t^{n R + W}\right) = \E\left[\left(t^n\right)^R\right] \E\left(t^W\right)\] But \begin{align*} \E\left(t^N\right) & = \frac{p t}{1 - (1 - p) t}, \quad |t| \lt \frac{1}{1 - p} \\ \E\left[\left(t^n\right)^R\right] & = \frac{1 - (1 - p)^n}{1 - (1 - p)^n t^n}, \quad |t| \lt \frac{1}{(1 - p)^n} \end{align*}

The mean and variance of \(W\) are

The results can be obtained from the definitions of mean and variance and derivatives of geometric series. The results can also be obtained by taking derivatives of the probability generating function in . For both methods the computations are extremely tedious. But the computations are easy using , the independence of \(R\) and \(W\), and the corresponding moments of \(R\) and \(N\).

Open the alternating coin experiment. Vary the parameters and note the shape and location of the mean\(\pm\)standard deviation bars. For selected values of the parameters, run the simulation 1000 times and compare the empirical means and standard deviations to the corresponding distribution values.

The following result explore some limiting distributions related to the alternating coin-tossing game.

Limiting distributions:

Players at the end of the tossing order should hope for a coin biased towards tails.

Open the alternating coin experiment. Although the parameter values are limited, you can beginn to see the convergence results in .

In the game of odd man out, we start with a specified number of players, each with a coin that has the same probability of heads. The players toss their coins at the same time. If there is an odd man, that is a player with an outcome different than all of the other players, then the odd player is eliminated; otherwise no player is eliminated. In any event, the remaining players continue the game in the same manner. A slight technical problem arises with just two players, since different outcomes would make both players odd

. So in this case, we might (arbitrarily) make the player with tails the odd man.

Suppose there are \(k \in \{2, 3, \ldots\}\) players and \(p \in [0, 1]\). In a single round, the probability of an odd man is \[r_k(p) = \begin{cases} 2 p (1 - p), & k = 2 \\ k p (1 - p)^{k-1} + k p^{k-1} (1 - p), & k \in \{3, 4, \ldots\} \end{cases}\]

Let \(Y\) denote the number of heads. If \(k = 2\), the event that there is an odd man is \(\{Y = 1\}\). If \(k \ge 3\), the event that there is an odd man is \(\{Y \in \{1, k - 1\}\}\). The result now follows since \(Y\) has a binomial distribution with parameters \( k \) and \( p \).

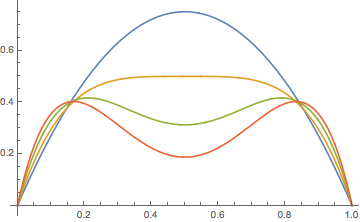

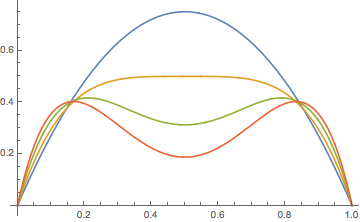

The graph of \(r_k\) is more interesting than you might think.

For \( k \in \{2, 3, \ldots\} \), \(r_k\) has the following properties:

These properties are clear from the functional form of \( r_k(p) \). Note that \( r_k(p) = r_k(1 - p) \).

For \( k \in \{2, 3, 4\} \), \(r_k\) has the following properties:

This follows by computing the first derivatives: \(r_2^\prime(p) = 2 (1 - 2 p)\), \(r_3^\prime(p) = 3 (1 - 2 p)\), \(r_4^\prime(p) = 4 (1 - 2 p)^3\), and the second derivatives: \( r_2^{\prime\prime}(p) = -4 \), \( r_3^{\prime\prime}(p) = - 6 \), \( r_4^{\prime\prime}(p) = -24 (1 - 2 p)^2 \).

For \( k \in \{5, 6, \ldots\} \), \(r_k\) has the following properties:

Note that \(r_k(p) = s_k(p) + s_k(1 - p)\) where \(s_k(t) = k t^{k-1}(1 - t)\) for \(t \in [0, 1]\). Also, \(p \mapsto s_k(p)\) is the dominant term when \(p \gt \frac{1}{2}\) while \(p \mapsto s_k(1 - p)\) is the dominant term when \(p \lt \frac{1}{2}\). A simple analysis of the derivative shows that \(s_k\) increases and then decreases, reaching its maximum at \((k - 1) / k\). Moreover, the maximum value is \(s_k\left[(k - 1) / k\right] = (1 - 1 / k)^{k-1} \to e^{-1}\) as \(k \to \infty\). Also, \( s_k \) is concave upward and then downward, with inflection point at \( (k - 2) / k \).

Suppose \(p \in (0, 1)\), and let \(N_k\) denote the number of rounds until an odd man is eliminated, starting with \(k\) players. Then \(N_k\) has the geometric distribution on \(\N_+\) with parameter \(r_k(p)\). The mean and variance are

As we might expect, \(\mu_k(p) \to \infty\) and \(\sigma_k^2(p) \to \infty\) as \(k \to \infty\) for fixed \(p \in (0, 1)\). On the other hand, from , \(\mu_k(p_k) \to e\) and \(\sigma_k^2(p_k) \to e^2 - e\) as \(k \to \infty\).

Suppose we start with \(k \in \{2, 3, \ldots\}\) players and \(p \in (0, 1)\). The number of rounds until a single player remains is \(M_k = \sum_{j = 2}^k N_j\) where \((N_2, N_3, \ldots, N_k)\) are independent and \(N_j\) has the geometric distribution on \(\N_+\) with parameter \(r_j(p)\). The mean and variance are

The form of \(M_k\) follows from : \(N_k\) is the number of rounds until the first player is eliminated. Then the game continues independently with \(k - 1\) players, so \(N_{k-1}\) is the number of additional rounds until the second player is eliminated, and so forth. Parts (a) and (b) follow from and standard properties of expected value and variance.

Starting with \(k\) players and probability of heads \(p \in (0, 1)\), the total number of coin tosses is \(T_k = \sum_{j=2}^k j N_j\). The mean and variance are

As before, the form of \(M_k\) follows from : \(N_k\) is the number of rounds until the first player is eliminated, and each these rounds has \(k\) tosses. Then the game continues independently with \(k - 1\) players, so \(N_{k-1}\) is the number of additional rounds until the second player is eliminated with each round having \(k - 1\) tosses, and so forth. Parts (a) and (b) also follow from and standard properties of expected value and variance.

Consider again a sequence of Bernoulli trials \( \bs X = (X_1, X_2, \ldots) \) with success parameter \( p \in (0, 1) \). Recall that the number of trials \( M \) before the first success (outcome 1) occurs has the geometric distribution on \( \N \) with parameter \( p \). A natural generalization is the random variable that gives the number of trials before a specific finite sequence of outcomes occurs for the first time. (Such a sequence is sometimes referred to as a word from the alphabet \( \{0, 1\} \) or simply a bit string). In general, finding the distribution of this variable is a difficult problem, with the difficulty depending very much on the nature of the word. The problem of finding just the expected number of trials before a word occurs can be solved using powerful tools from the theory of renewal processes and from the theory of martingalges.

To set up the notation, let \( \bs x \) denote a finite bit string and let \( M_{\bs x} \) denote the number of trials before \( \bs x \) occurs for the first time. Finally, let \( q = 1 - p \). Note that \( M_{\bs x} \) takes values in \( \N \). In the following exercises, we will consider \( \bs x = 10 \), a success followed by a failure. As always, try to derive the results yourself before expanding the details.

The probability density function \( f_{10} \) of \( M_{10} \) is given as follows:

For \( n \in \N \), the event \(\{M_{10} = n\}\) can only occur if there is an initial string of 0s of length \( k \in \{0, 1, \ldots, n\} \) followed by a string of 1s of length \( n - k \) and then 1 on trial \( n + 1 \) and 0 on trial \( n + 2 \). Hence \[ f_{10}(n) = \P(M_{10} = n) = \sum_{k=0}^n q^k p^{n - k} p q, \quad n \in \N \] The stated result then follows from standard results on geometric series.

It's interesting to note that \( f \) is symmetric in \( p \) and \( q \), that is, symmetric about \( p = \frac{1}{2} \). It follows that the distribution function, probability generating function, expected value), and variance, which we consider below, are all also symmetric about \( p = \frac{1}{2} \). It's also interesting to note that \( f_{10}(0) = f_{10}(1) = p q \), and this is the largest value. So regardless of \( p \in (0, 1) \) the distribution is bimodal with modes 0 and 1.

The distribution function \( F_{10} \) of \( M_{10} \) is given as follows:

The probability generating function \( P_{10} \) of \( M_{10} \) is given as follows:

By definition, \[ P_{10}(t) = \E\left(t^{M_{10}}\right) = \sum_{n=0}^\infty f_{10}(n) t^n \] for all \( t \in \R \) for which the series converges absolutely. The stated result then follows from the theorem above, and once again, standard results on geometric series.

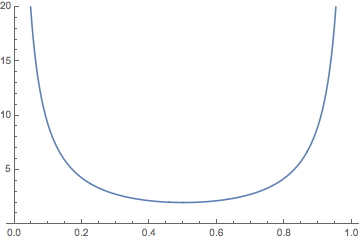

The mean of \( M_{10} \) is given as follows:

The graph of \( \E(M_{10}) \) as a function of \( p \in (0, 1) \) is given below. It's not surprising that \( \E(M_{10}) \to \infty \) as \( p \downarrow 0 \) and as \( p \uparrow 1 \), and that the minimum value occurs when \( p = \frac{1}{2} \).

The variance of \( M_{10} \) is given as follows:

Let \(P\) denote the probability generating function in . Recall that \( P^{\prime \prime}_{10}(1) = \E[M_{10}(M_{10} - 1)] \), the second factorial moment, and so \[ \var(M_{10}) = P^{\prime \prime}_{10}(1) + P^\prime_{10}(1) - [P^\prime_{10}(1)]^2 \] The stated result then follows from calculus.